Hearing (Audition)

Some of the most well-known celebrities and top earners in the world are musicians. Our worship of musicians may seem silly when you consider that all they are doing is vibrating the air a certain way to create sound waves, the physical stimulus for audition.

People can extract a great deal of information from the basic qualities of sound waves. The amplitude (or intensity) of a sound wave codes for the loudness of a stimulus; higher amplitude sound waves result in louder sounds. The pitch of a stimulus is coded in the frequency of a sound wave; higher frequency sounds are higher pitched. We can also gauge the quality, or timbre, of a sound by the complexity of the sound wave. This allows us to tell the difference between bright and dull sounds as well as natural and synthesized instruments (Välimäki & Takala, 1996).
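To make the link between physical properties and perception more concrete, the sketch below builds simple sampled waveforms using only Python's standard library. The function and parameter names (`tone`, `amplitude`, `harmonics`) and the 8,000 Hz sample rate are illustrative assumptions, not part of any audio library. It treats amplitude, frequency, and harmonic content as stand-ins for loudness, pitch, and timbre; in reality these perceptual qualities do not map one-to-one onto the physical ones (perceived loudness, for example, also depends on frequency).

```python
import math

SAMPLE_RATE = 8000  # samples per second; an arbitrary choice for this sketch


def tone(freq_hz, amplitude=1.0, harmonics=(1.0,), duration_s=1.0,
         sample_rate=SAMPLE_RATE):
    """Return a list of samples for a periodic sound wave.

    freq_hz    -- fundamental frequency (relates to perceived pitch)
    amplitude  -- peak size of the wave (relates to perceived loudness)
    harmonics  -- hypothetical relative weights for the 1st, 2nd, 3rd, ...
                  harmonics; more components make a more complex wave
                  (relates to perceived timbre)
    """
    n = int(duration_s * sample_rate)
    total = sum(abs(w) for w in harmonics)  # scale so the peak never exceeds amplitude
    samples = []
    for i in range(n):
        t = i / sample_rate
        s = sum(w * math.sin(2 * math.pi * freq_hz * (k + 1) * t)
                for k, w in enumerate(harmonics))
        samples.append(amplitude * s / total)
    return samples


def peak(samples):
    """Largest absolute sample value: a rough measure of amplitude."""
    return max(abs(s) for s in samples)


def upward_zero_crossings(samples):
    """Count negative-to-nonnegative crossings; for these waves, one per cycle."""
    return sum(1 for a, b in zip(samples, samples[1:]) if a < 0 <= b)


quiet = tone(440, amplitude=0.25)
loud = tone(440, amplitude=1.0)
high = tone(880, amplitude=1.0)
rich = tone(440, amplitude=1.0, harmonics=(1.0, 0.5, 1 / 3))

print("peaks:", round(peak(quiet), 2), round(peak(loud), 2))
print("cycles in 1 s:", upward_zero_crossings(loud), upward_zero_crossings(high))
print("cycles in 1 s (rich):", upward_zero_crossings(rich))
```

The `loud` and `rich` tones repeat at the same rate (same fundamental, so roughly the same pitch) but have differently shaped waves, which is the kind of physical difference we hear as timbre.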

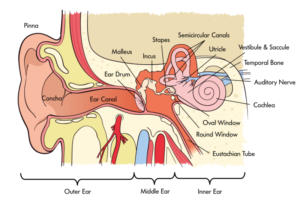

In order for us to sense sound waves from our environment, they must reach our inner ear. Lucky for us, we have evolved tools that allow those waves to be funneled and amplified during this journey. Initially, sound waves are funneled by your pinna (the external part of your ear that you can actually see) into your auditory canal (the hole you stick Q-tips into despite the box advising against it). During their journey, sound waves eventually reach a thin, stretched membrane called the tympanic membrane (eardrum), which vibrates and passes those vibrations on to the three smallest bones in the body: the malleus (hammer), the incus (anvil), and the stapes (stirrup), collectively called the ossicles. Both the tympanic membrane and the ossicles amplify the sound waves before they enter the fluid-filled cochlea, a snail-shell-like bone structure containing auditory hair cells arranged on the basilar membrane (see Figure 4) according to the frequency they respond to (called tonotopic organization). Depending on age, humans can normally detect sounds between 20 Hz and 20 kHz. It is inside the cochlea that sound waves are converted into an electrical message.
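Tonotopic organization can be sketched with the Greenwood function, an empirical formula, F = A(10^(ax) − k), that maps relative position x along the basilar membrane to the frequency that best excites that spot. The Python below assumes the constants often quoted for the human cochlea (A = 165.4 Hz, a = 2.1, k = 0.88); treat them as approximate, since fitted values vary, and the function names are hypothetical.

```python
import math

# Constants often quoted for the human cochlea in the Greenwood function,
# an empirical fit; other species need different values.
A_HZ = 165.4   # scaling constant, in Hz
ALPHA = 2.1    # slope per unit of relative cochlear length
K = 0.88       # low-frequency correction term


def best_frequency_hz(x):
    """Frequency that best excites the basilar membrane at relative position x,
    where x = 0 is the apex (tip of the 'snail shell') and x = 1 is the base
    (the end nearest the middle ear)."""
    if not 0.0 <= x <= 1.0:
        raise ValueError("x must lie between 0 (apex) and 1 (base)")
    return A_HZ * (10 ** (ALPHA * x) - K)


def position_for(freq_hz):
    """Inverse of best_frequency_hz: where along the membrane a tone is encoded."""
    return math.log10(freq_hz / A_HZ + K) / ALPHA


for x in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(f"x = {x:.2f} (apex -> base): about {best_frequency_hz(x):,.0f} Hz")

print(f"a 1 kHz tone peaks about {position_for(1000):.0%} of the way from apex to base")
```

With these constants the two ends of the membrane land near 20 Hz (apex) and 20 kHz (base), which lines up with the normal range of human hearing; low pitches are encoded toward the tip of the cochlea and high pitches toward its base.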

Because we have an ear on each side of our head, we are capable of localizing sound in 3D space pretty well (in the same way that having two eyes produces 3D vision). Have you ever dropped something on the floor without seeing where it went? Did you notice that you were somewhat capable of locating this object based on the sound it made when it hit the ground? We can reliably locate something based on which ear receives the sound first. What about the height of a sound source? If both ears receive a sound at the same time, how are we capable of localizing sound vertically? Research in cats (Populin & Yin, 1998) and humans (Middlebrooks & Green, 1991) suggests that we rely on differences in the quality of sound waves that depend on the vertical position of their source.
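The "which ear hears it first" cue can be quantified as an interaural time difference (ITD). The sketch below uses Woodworth's spherical-head approximation, ITD = (r / c)(θ + sin θ), where θ is the horizontal angle of a distant source measured from straight ahead. The head radius (8.75 cm) and speed of sound (343 m/s) are assumed typical values, and the function names are illustrative.

```python
import math

HEAD_RADIUS_M = 0.0875       # assumed typical adult head radius (8.75 cm)
SPEED_OF_SOUND_M_S = 343.0   # speed of sound in air at roughly 20 degrees C


def itd_seconds(azimuth_deg):
    """Interaural time difference for a distant sound source.

    Woodworth's spherical-head approximation: ITD = (r / c) * (theta + sin(theta)).
    azimuth_deg: 0 = straight ahead, +90 = directly right, -90 = directly left.
    Positive results mean the right ear hears the sound first.
    """
    if not -90.0 <= azimuth_deg <= 90.0:
        raise ValueError("this simple model covers -90 to +90 degrees only")
    theta = math.radians(azimuth_deg)
    return (HEAD_RADIUS_M / SPEED_OF_SOUND_M_S) * (theta + math.sin(theta))


def leading_ear(azimuth_deg):
    """Which ear the sound reaches first, according to the ITD."""
    itd = itd_seconds(azimuth_deg)
    if itd > 0:
        return "right"
    if itd < 0:
        return "left"
    return "both at once"


for az in (-60, 0, 30, 90):
    print(f"{az:+4d} deg: ITD = {itd_seconds(az) * 1000:.2f} ms, "
          f"heard first by: {leading_ear(az)}")
```

Even for a sound directly to one side, the delay in this model is only about two-thirds of a millisecond, so the auditory system is resolving very small timing differences. The model also gives zero delay for any source on the midline, whether straight ahead or directly overhead, which is why arrival time alone cannot explain vertical localization.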

After being processed by auditory hair cells, electrical signals are sent through the cochlear nerve (a division of the vestibulocochlear nerve) to the thalamus, and then the primary auditory cortex of the temporal lobe. Interestingly, the tonotopic organization of the cochlea is maintained in this area of the cortex (Merzenich, Knight, & Roth, 1975; Romani, Williamson, & Kaufman, 1982). However, the role of the primary auditory cortex in processing the wide range of features of sound is still being explored (Walker, Bizley, & Schnupp, 2011).

Balance and the vestibular system

The inner ear isn’t only involved in hearing; it’s also associated with our ability to balance and detect where we are in space. The vestibular system includes three semicircular canals, fluid-filled bone structures containing cells that respond to changes in the head’s orientation in space. Information from the vestibular system is sent through the vestibular nerve (the other division of the vestibulocochlear nerve) to muscles involved in the movement of our eyes, neck, and other parts of our body. This information allows us to keep our gaze on an object while we are in motion. Disturbances in the vestibular system can cause problems with balance, including vertigo.