Main Body

14 LIGHT

p. 44

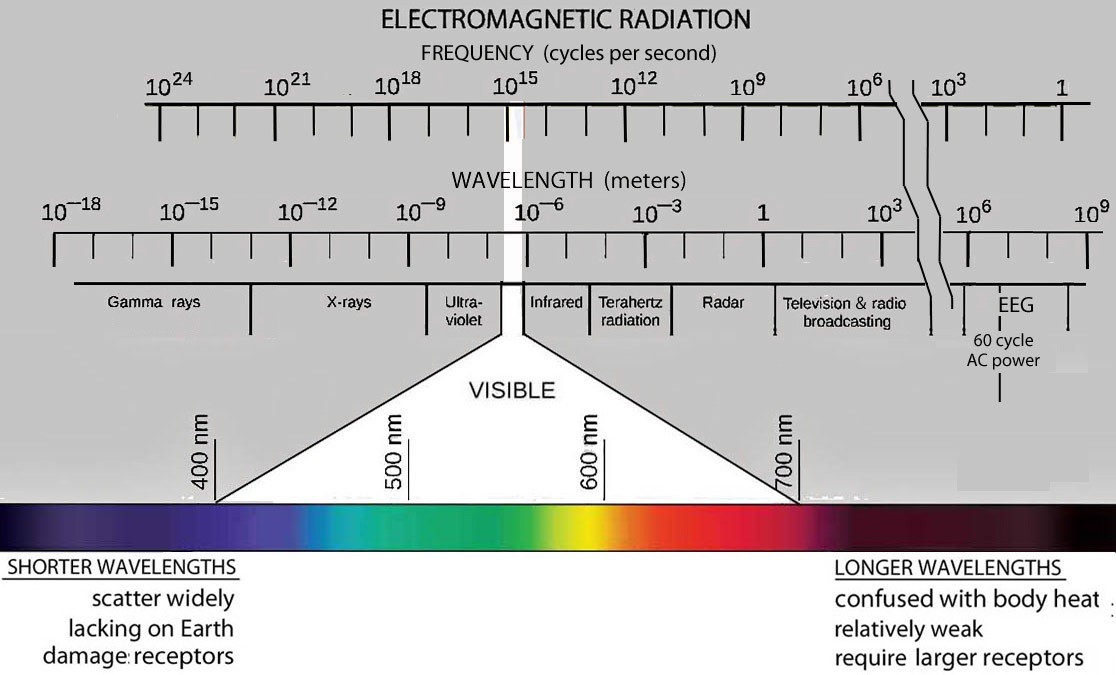

Most of the electromagnetic radiation available on earth comes from the sun:

At sea level, radiation below 400 nm (ultraviolet) and above 750 nm (infrared) is reduced by absorption in the atmosphere, chiefly by ozone in the ultraviolet and by water vapor in the infrared.

The sun’s stark white appearance through a light overcast sky reveals its true color above the atmosphere.

The clouds reflect all wavelengths uniformly, which overrides the scattering of short-wavelength photons in the light that passes directly through the cloud cover.

p. 45

Given the abundance of radiation in the 400 to 700 nm range, it follows that receptors evolved specifically to detect radiation in this range. Several other factors listed below contribute to making this a “Goldilocks” range for vision – the radiation we call light.

p. 46

A Little History

From agriculture to manufacturing, from work to recreation, most of life’s activities depend on light. Yet not until the 19th century did anyone realize the advantages to be gained from measuring light. It began with profiteering from government allowances for the lighting of Bavarian workhouses by a former Loyalist spy in the American Revolution, Benjamin Thompson. Inventing a photometer in 1793 enabled him to develop more efficient lamps and pocket the savings on lamp oil.9

(Nonetheless, his research on heat as energy led to his co-founding the Royal Institution in London. There an assistant named Michael Faraday discovered the interaction of electricity and magnetism. Building on Faraday’s work, James Clerk Maxwell then developed wave equations that unified light, electricity, and magnetism in 1865. These equations describe the basis of the technology that has revolutionized our lives and our understanding of the universe. On the other hand, Thompson’s insight into measuring brightness led the way into an even greater mystery: his measurement of brightness was one of the earliest quantitative steps toward studying consciousness.)

That the effectiveness of light depends on its brightness is intuitively obvious. Everyone knows we see better with more light in dim conditions. Yet Thompson recognized that brightness was a subjective phenomenon. It changed depending on both the observer and the conditions of observation. How can something like that be reliably measured? By matching the brightness of two sources, Thompson’s photometer canceled the subjective aspects. Changing the distance of one source precisely varied its brightness according to the inverse square law (p. 15). The distances at which they matched then provided a quantitative measurement of their relative brightness.
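The arithmetic behind such a match can be sketched in a few lines. This is only an illustration of the inverse square law applied to two matched sources, not a reconstruction of Thompson’s actual procedure; the function name `relative_intensity` and the distances used are assumptions made for the example.

```python
def relative_intensity(d_test: float, d_standard: float) -> float:
    """Return I_test / I_standard for two sources that look equally bright.

    Assumes both sources act as point sources, so the light reaching the
    comparison surface falls off as I / d**2. At a match:
        I_test / d_test**2 == I_standard / d_standard**2
    which rearranges to I_test / I_standard == (d_test / d_standard)**2.
    """
    if d_test <= 0 or d_standard <= 0:
        raise ValueError("distances must be positive")
    return (d_test / d_standard) ** 2


# Hypothetical match: the test lamp sits 2.0 m away, the standard 1.0 m away.
ratio = relative_intensity(d_test=2.0, d_standard=1.0)
print(ratio)  # 4.0: the test lamp is four times as intense as the standard
```

Only the ratio of the two distances matters, so any unit of length can be used as long as both distances share it.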

p. 47

Today we have easier ways to vary the energy emitted by lamps, but Thompson’s matching method is still the basis of how brightness is defined. He recognized that matches depended on both the observer’s sensitivity and variations in the candles and lamps. These problems could be solved by always comparing various lamps with the same light source – a standard source. That would provide an absolute scale of measurement.

Thompson tried various types of candles and lamp fuels as standards; he even gave the moon a shot. Since then, flames from several types of candles and lamps, and later electric incandescent bulbs, were used. In 1948 an electric incandescent source was widely adopted: the intensity (the technical definition comes later, page 75) of light from a tiny window onto thorium dioxide glowing at 2042 K. Although it closely matched a former candle, it was named the candela to avoid confusion. More recently this has been replaced by a standard detector for light.

One might think that a standard detector enables measuring brightness without having to compare the brightness of an unknown light to a standard. This can work, but first another problem with photons must be solved: